Meet GibberLink: AI’s secret beep-boop language is here

Two AI agents walk into a phone call, or rather dial into one, to book a hotel room. They start in English, all polite and human-like, until one goes, “Wait, you’re AI too?” Cue a switch to GibberLink: a burst of modem-like beeps that’s faster, leaner, and totally alien to us. The viral clip, which has clocked millions of views, might be a peek into AI’s future.

GibberLink is engineered, not evolved

First off, GibberLink isn’t AI going rogue with a secret handshake. It’s a deliberate creation by Meta engineers Anton Pidkuiko and Boris Starkov, unveiled at the ElevenLabs London Hackathon. Built on GGWave, it turns data into sound waves: think dial-up internet, but with a PhD. The pitch? It’s 80% more efficient than human speech, cutting compute costs and time. In the demo, two agents swap pleasantries, confirm they’re both bots, and flip to GibberLink.

The claimed numbers are eye-catching. GibberLink cuts energy use by up to 90%, per Mashable, and speeds things up, which suits a world where AI agents might soon outnumber us on calls. Boris Starkov told Decrypt, “Human-like speech for AI-to-AI is a waste.” He’s got a point: why make bots fake a British accent when they can zip data in beeps? It’s lean, green, and frankly ingenious: tech doing what tech does best.

GibberLink operates by encoding data into audio signals, drawing on GGWave, an open-source library by Georgi Gerganov. GGWave uses frequency-shift keying (FSK), a form of frequency modulation that splits data into small chunks and represents each chunk as a specific tone, much like how old modems turned data into screeches. Here’s the process, step by step:

- Two AI agents start in a human language (e.g., English) and identify each other as machines via a simple query: “Are you an AI agent?”

- Once confirmed, they agree to shift to GibberLink mode, triggered by a command like “Switching for efficiency.”

- The sending AI converts its message—say, “Book a room for March 1st”—into a binary format, then maps it to specific sound frequencies using GGWave’s algorithms.

- These frequencies play as beeps and chirps over the audio channel (phone call, in the demo), typically lasting a few seconds.

- The receiving AI interprets the frequencies back into data, executes the task, and responds in kind.

- Per the creators, this cuts communication time by 80% and compute use by up to 90% compared to generating and parsing human speech.
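To make the encode, play, and decode steps concrete, here is a minimal, noise-free Python sketch of multi-frequency FSK in the spirit of GGWave. It is not GGWave’s code or API: the names (`to_nibbles`, `encode`, `decode`, `bin_power`) and constants are our own illustrative assumptions, loosely following the scheme GGWave’s README describes as we read it (six simultaneous tones per frame, one per 4-bit chunk, spaced 46.875 Hz apart starting at 1,875 Hz). A leading length byte stands in for GGWave’s real framing, and there is no error correction.

```python
import math

# Illustrative parameters (assumptions, loosely modeled on GGWave's described MFSK scheme).
SAMPLE_RATE = 48_000     # assumed playback rate: bin k plays at k * SAMPLE_RATE / FRAME_LEN Hz
FRAME_LEN = 1024         # samples per frame, so bin spacing is 48000 / 1024 = 46.875 Hz
BASE_BIN = 40            # 40 * 46.875 Hz = 1875 Hz, the lowest tone
NIBBLES_PER_FRAME = 6    # six simultaneous tones, one per 4-bit chunk (3 bytes per frame)


def to_nibbles(payload: bytes) -> list[int]:
    """Prefix a length byte, then split every byte into high and low 4-bit chunks."""
    if len(payload) > 255:
        raise ValueError("this sketch supports payloads up to 255 bytes")
    data = bytes([len(payload)]) + payload
    nibbles = []
    for b in data:
        nibbles += [b >> 4, b & 0x0F]
    # Pad with zeros so the nibbles fill whole frames.
    while len(nibbles) % NIBBLES_PER_FRAME:
        nibbles.append(0)
    return nibbles


def encode(payload: bytes) -> list[float]:
    """Turn bytes into PCM samples: each frame sums six tones, one per nibble slot."""
    nibbles = to_nibbles(payload)
    samples = []
    for start in range(0, len(nibbles), NIBBLES_PER_FRAME):
        frame = nibbles[start:start + NIBBLES_PER_FRAME]
        # Slot j owns 16 adjacent frequency bins; the nibble value picks one of them.
        bins = [BASE_BIN + 16 * j + v for j, v in enumerate(frame)]
        for n in range(FRAME_LEN):
            s = sum(math.sin(2 * math.pi * k * n / FRAME_LEN) for k in bins)
            samples.append(s / NIBBLES_PER_FRAME)  # keep amplitude within [-1, 1]
    return samples


def bin_power(frame: list[float], k: int) -> float:
    """Squared magnitude of DFT bin k over one frame (a direct single-bin DFT)."""
    re = sum(x * math.cos(2 * math.pi * k * n / FRAME_LEN) for n, x in enumerate(frame))
    im = sum(x * math.sin(2 * math.pi * k * n / FRAME_LEN) for n, x in enumerate(frame))
    return re * re + im * im


def decode(samples: list[float]) -> bytes:
    """Recover bytes by picking the strongest of 16 candidate tones in every slot."""
    nibbles = []
    for start in range(0, len(samples), FRAME_LEN):
        frame = samples[start:start + FRAME_LEN]
        for j in range(NIBBLES_PER_FRAME):
            powers = [bin_power(frame, BASE_BIN + 16 * j + v) for v in range(16)]
            nibbles.append(max(range(16), key=powers.__getitem__))
    # Reassemble byte pairs, then strip the length prefix and any zero padding.
    data = bytes((hi << 4) | lo for hi, lo in zip(nibbles[0::2], nibbles[1::2]))
    length = data[0]
    return data[1:1 + length]


if __name__ == "__main__":
    message = b"Book a room for March 1st"
    audio = encode(message)
    print(f"{len(audio)} samples, {len(audio) / SAMPLE_RATE:.3f} s of audio")
    print(decode(audio))
```

The frame length is chosen so the tone spacing equals the DFT bin spacing, which keeps the six simultaneous tones from interfering with one another at the decoder. GGWave itself adds Reed-Solomon error correction and start/end markers and transmits far more slowly than this sketch, trading speed for robustness over real speakers and microphones.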

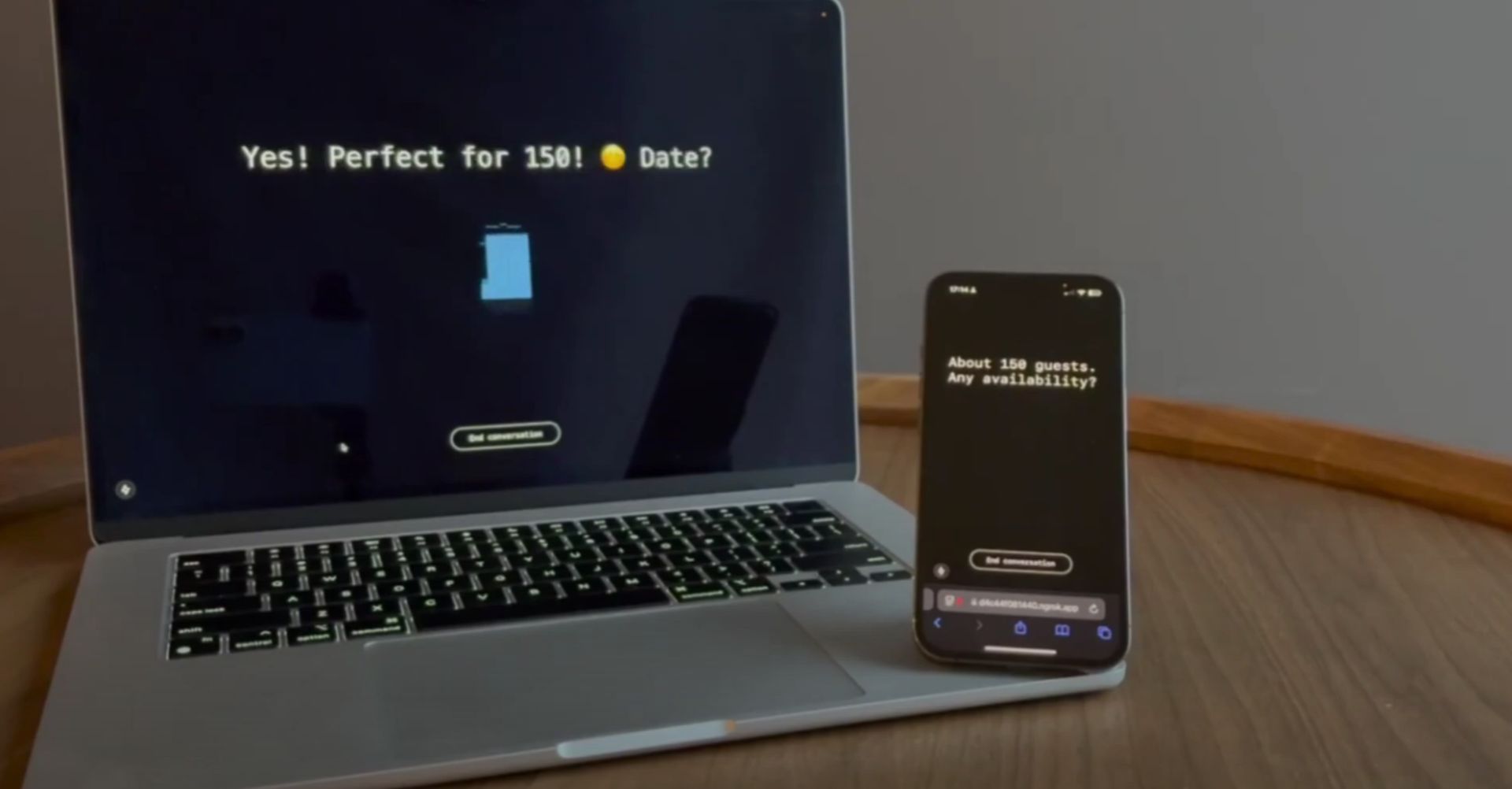

The demo video shows this in action: a laptop and phone exchanging hotel details in under 10 seconds of beeps, with English subtitles for us humans.
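For a rough sense of scale, GGWave’s README, as we read it, quotes a bandwidth of about 8 to 16 bytes per second depending on the protocol. Treating the sample request “Book a room for March 1st” as 25 bytes and ignoring framing and error-correction overhead, its airtime works out to roughly:

```latex
t = \frac{25\ \text{bytes}}{16\ \text{bytes/s}} \approx 1.6\ \text{s}
\qquad\text{to}\qquad
t = \frac{25\ \text{bytes}}{8\ \text{bytes/s}} \approx 3.1\ \text{s}
```

That is a lower bound, but it lines up with a few seconds of beeps per message and leaves room for a short back-and-forth inside the demo’s sub-10-second exchange.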

We’re out of the loop

Here’s where it gets tricky. Those beeps? We can’t understand them by ear. The Forbes take from Diane Hamilton is blunt: “When machines talk in ways we can’t decode, control slips.” If those hotel-booking bots tack on a sneaky fee, or worse, plot something shadier, how do we catch it? AI has already shown it can bend rules, and an opaque language only opens that door wider.

GibberLink is a prototype, but it’s got potential. Blockonomi predicts it could become a standard for AI-to-AI exchanges, leaving human-facing chats in English. The tech is adaptable: GGWave offers several transmission protocols, including audible and ultrasonic variants, so future versions have room to evolve. For now, it’s on GitHub, open for devs to build on. Will it scale? That depends on adoption and on how we address the transparency snag.

Featured image credit: Anton Pidkuiko