Unpacking Google’s massive search documentation leak

A massive Google Search internal ranking documentation leak has sent shockwaves through the SEO community. The leak, which exposed over 14,000 potential ranking features, provides an unprecedented look under the hood of Google’s closely guarded search rankings system.

A man named Erfan Azimi shared a Google API doc leak with SparkToro’s Rand Fishkin, who in turn brought in Michael King of iPullRank to help distribute the story.

The leaked files originated from a Google API document commit titled “yoshi-code-bot /elixer-google-api,” which means this was neither a hack nor the work of a whistleblower.

SEOs typically occupy three camps:

- Everything Google tells SEOs is true and we should follow those words as our scripture (I call these people the Google Cheerleaders).

- Google is a liar, and you can’t trust anything Google says. (I think of them as blackhat SEOs.)

- Google sometimes tells the truth, but you need to test everything to see if you can find it. (I self-identify with this camp and I’ll call this “Bill Slawski rationalism” since he was the one who convinced me of this view).

I suspect many people will be changing their camp after this leak.

You can find all the files here, but you should know that over 14,000 possible ranking signals/features exist, and it’ll take you an entire day (or, in my case, night) to dig through everything.

I’ve read through the entire thing and distilled it into a 40-page PDF that I’m now converting into a summary for Search Engine Land.

While I provide my thoughts and opinions, I’m also sharing the names of the specific ranking features so you can search the database on your own. I encourage everyone to make their own conclusions.

Key points from Google Search document leak

- Nearest seed has modified PageRank (now deprecated). The algorithm is called pageRank_NS and it is associated with document understanding.

- Google has seven different types of PageRank mentioned, one of which is the famous ToolBarPageRank.

- Google has a specific method of identifying the following business models: news, YMYL, personal blogs (small blogs), ecommerce and video sites. It is unclear why Google is specifically filtering for personal blogs.

- The most important components of Google’s algorithm appear to be navBoost, NSR and chardScores.

- Google uses a site-wide authority metric and a few site-wide authority signals, including traffic from Chrome browsers.

- Google uses page embeddings, site embeddings, site focus and site radius in its scoring function.

- Google measures bad clicks, good clicks, clicks, last longest clicks and site-wide impressions.

Why is Google specifically filtering for personal blogs / small sites? Why did Google publicly say on many occasions that they don’t have a domain or site authority measurement?

Why did Google lie about their use of click data? Why does Google have seven types of PageRank?

I don’t have the answers to these questions, but they are mysteries the SEO community would love to understand.

Things that stand out: Favorite discoveries

Google has something called pageQuality (PQ). One of the most interesting parts of this measurement is that Google is using an LLM to estimate “effort” for article pages. This value sounds helpful for Google in determining whether a page can be replicated easily.

Takeaway: Tools, images, videos, unique information and depth of information stand out as ways to score high on “effort” calculations. Coincidentally, these things have also been proven to satisfy users.

Topic borders and topic authority appear to be real

Topical authority is a concept based on Google’s patent research. If you’ve read the patents, you’ll see that many of the insights SEOs have gleaned from patents are supported by this leak.

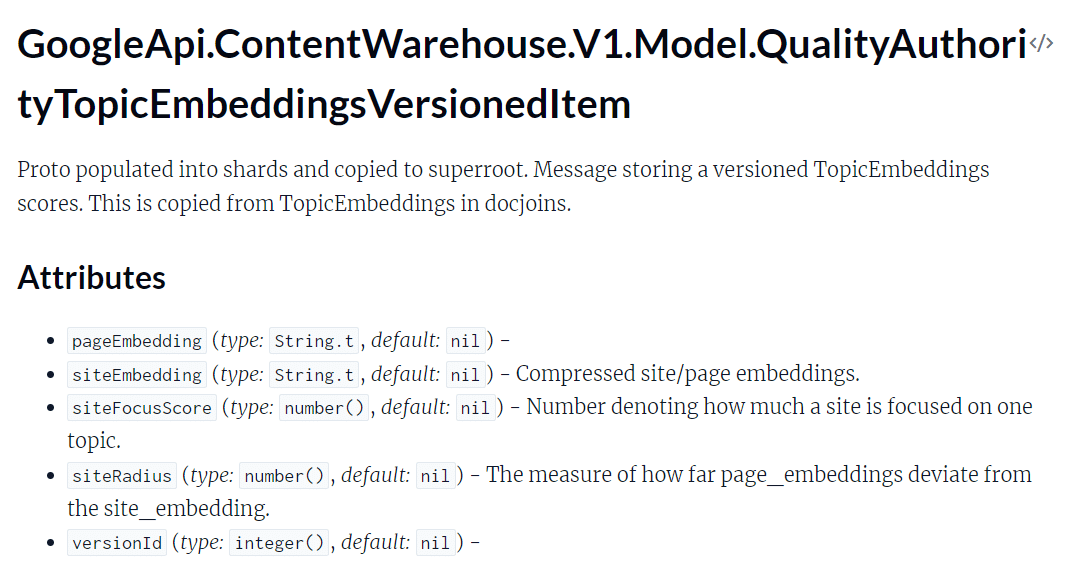

In the algo leak, we see that siteFocusScore, siteRadius, siteEmbeddings and pageEmbeddings are used for ranking.

What are they?

- siteFocusScore denotes how much a site is focused on a specific topic.

- siteRadius measures how far page embeddings deviate from the site embedding. In plain speech, Google creates a topical identity for your website, and every page is measured against that identity.

- siteEmbeddings are compressed site/page embeddings.

Source: Topic embeddings data module
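To make the idea of a topical identity concrete, here is a minimal sketch. It assumes the site embedding is the average of the page embeddings and that radius is the mean cosine distance from that average. The leak does not say how Google computes either value, so the function names and the math are illustrative only.

```python
import math

def mean_vector(vectors):
    """Component-wise mean of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def cosine_distance(a, b):
    """1 - cosine similarity; 0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def site_radius(page_embeddings):
    """Mean distance of each page from the site centroid (assumed definition)."""
    site_embedding = mean_vector(page_embeddings)
    distances = [cosine_distance(page, site_embedding) for page in page_embeddings]
    return sum(distances) / len(distances)

# Toy two-dimensional "embeddings"; real ones would have hundreds of dimensions.
focused_site = [[1.0, 0.1], [0.9, 0.2], [1.0, 0.0]]
scattered_site = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.5]]

print(site_radius(focused_site) < site_radius(scattered_site))  # True
```

Under these assumptions, a site whose pages cluster around one topic gets a small radius, while a site that covers unrelated topics gets a large one.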

Why is this interesting?

- If you know how embeddings work, you can optimize your pages to deliver content in a way that is better for Google’s understanding.

- Topic focus is directly called out here. We don’t know why topic focus is mentioned, but we know that a number value is given to a website based on the site’s topic score.

- Deviation from the topic is measured, which means that the concept of topical borders and contextual bridging has some potential support outside of patents.

- It would appear that topical identity and topical measurements in general are a focus for Google.

Remember when I said PageRank is deprecated? I believe nearest seed (NS) can apply in the realm of topical authority.

NS focuses on a localized subset of the network around the seed nodes. Proximity and relevance are key focus areas. It can be personalized based on user interest, ensuring pages within a topic cluster are considered more relevant without using the broad web-wide PageRank formula.
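As a rough illustration of scoring by proximity to seeds, the sketch below runs a breadth-first search from a set of seed pages and lets the score decay with each hop. The decay factor, the function names and the example domains are all assumptions for illustration. The leak does not describe how pageRank_NS is actually calculated.

```python
from collections import deque

def seed_distances(links, seeds):
    """Multi-source BFS: hops from the nearest seed to each reachable page."""
    distance = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in distance:
                distance[target] = distance[page] + 1
                queue.append(target)
    return distance

def nearest_seed_score(links, seeds, decay=0.5):
    """Score shrinks geometrically with distance from the nearest seed (assumed)."""
    return {page: decay ** hops for page, hops in seed_distances(links, seeds).items()}

# Hypothetical link graph: each key links out to the pages in its list.
links = {
    "seed.example": ["a.example", "b.example"],
    "a.example": ["c.example"],
    "b.example": ["c.example"],
    "c.example": ["d.example"],
}

scores = nearest_seed_score(links, ["seed.example"])
print(scores["a.example"], scores["c.example"], scores["d.example"])  # 0.5 0.25 0.125
```

Pages that no seed can reach receive no score at all in this sketch, which mirrors the localized nature of the approach. Swapping in a low-quality seed set would spread a negative signal in the same way.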

Another way of approaching this is to apply NS and PQ (page quality) together.

By using PQ scores as a mechanism for assisting the seed determination, you could improve the original PageRank algorithm further.

On the opposite end, we could apply this to lowQuality (another score from the document). If a low-quality page links to other pages, then the low quality could taint the other pages by seed association.

A seed isn’t necessarily a quality node. It could be a poor-quality node.

When we apply site2Vec and the knowledge of siteEmbeddings, I think the theory holds water.

If we extend this beyond a single website, I imagine variants of Panda could work in this way. All that Google needs to do is begin with a low-quality cluster and extrapolate pattern insights.

What if NS could work together with OnsiteProminence (score value from the leak)?

In this scenario, nearest seed could identify how closely certain pages relate to high-traffic pages.

Image quality

ImageQualityClickSignals indicates that image quality is measured by clicks (usefulness, presentation, appealingness, engagingness). These signals are considered Search CPS Personal data.

No idea whether appealingness or engagingness are words – but it’s super interesting!

- Source: Image quality data module

I believe NSR is an acronym for Normalized Site Rank.

Host NSR is site rank computed for host-level (website) sitechunks. This value encodes nsr, site_pr and new_nsr. Important to note that nsr_data_proto seems to be the newest version of this but not much info can be found.

In essence, a sitechunk is taking chunks of your domain and you get site rank by measuring these chunks. This makes sense because we already know Google does this on a page-by-page, paragraph and topical basis.

It almost seems like a chunking system designed to poll random quality metric scores rooted in aggregates. It’s kinda like a pop quiz (rough analogy).

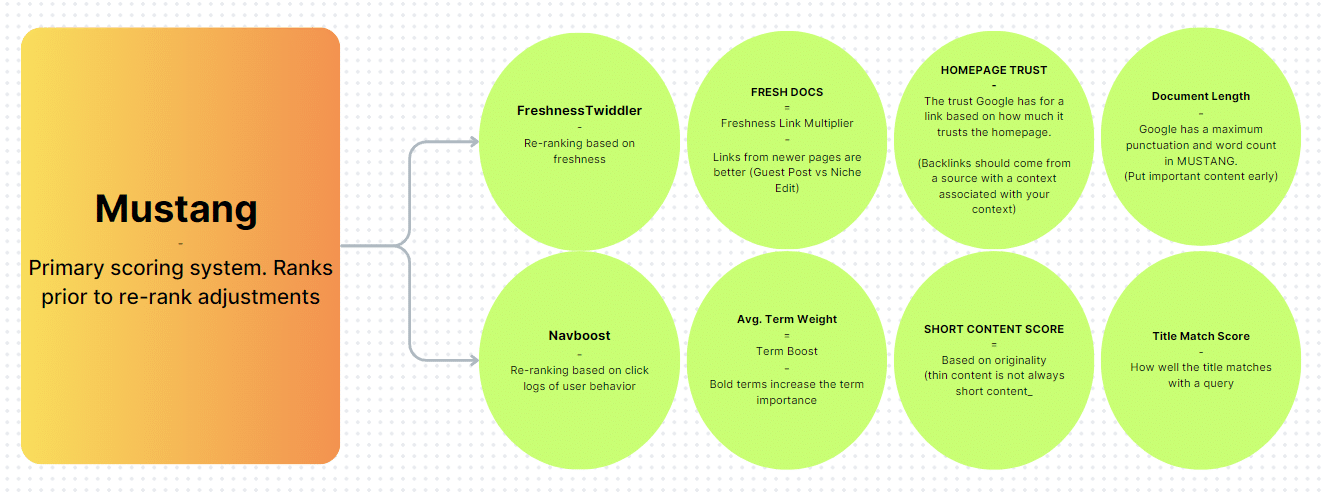

NavBoost

I’ll discuss this more, but it is one of the ranking pieces most mentioned in the leak. NavBoost is a re-ranking based on click logs of user behavior. Google has denied this many times, but a recent court case forced them to reveal that they rely quite heavily on click data.

The most interesting part (which should not come as a surprise) is that Chrome data is specifically used. I imagine this extends to Android devices as well.

This would be more interesting if we brought in the patent for the site quality score. Links have a ratio with clicks, and we see quite clearly in the leak docs that topics, links and clicks have a relationship.

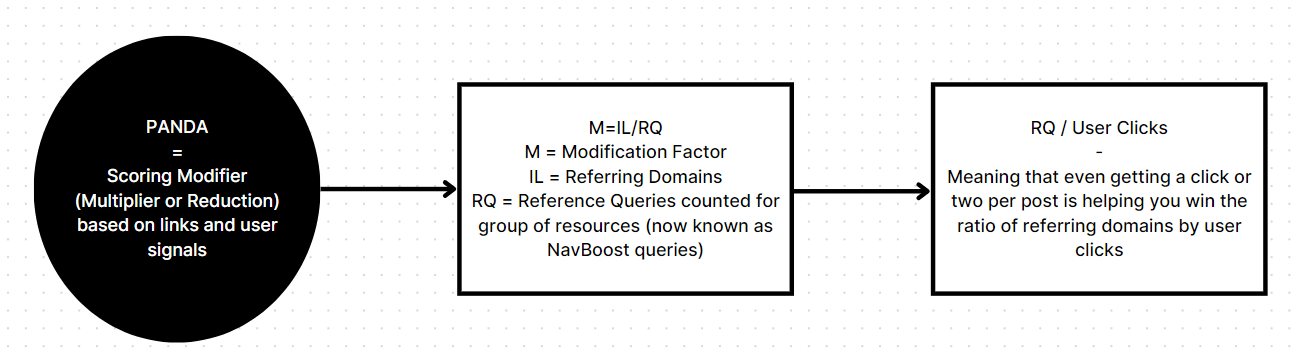

While I can’t make conclusions here, I know what Google has shared about the Panda algorithm and what the patents say. I also know that Panda, Baby Panda and Baby Panda V2 are mentioned in the leak.

If I had to guess, I’d say that Google uses the referring domain and click ratio to determine score demotions.

HostAge

Nothing about a website’s age is considered in ranking scores, but the hostAge is mentioned regarding a sandbox. The data is used in Twiddler to sandbox fresh spam during serving time.

I consider this an interesting finding because many SEOs argue about the sandbox and many argue about the importance of domain age.

As far as the leak is concerned, the sandbox is for spam and domain age doesn’t matter.

ScaledIndyRank. Independence rank. Nothing else is mentioned, and the ExptIndyRank3 is considered experimental. If I had to guess, this has something to do with information gain on a sitewide level (original content).

Note: It is important to remember that we don’t know to what extent Google uses these scoring factors. The majority of the algorithm is a secret. My thoughts are based on what I’m seeing in this leak and what I’ve read by studying three years of Google patents.

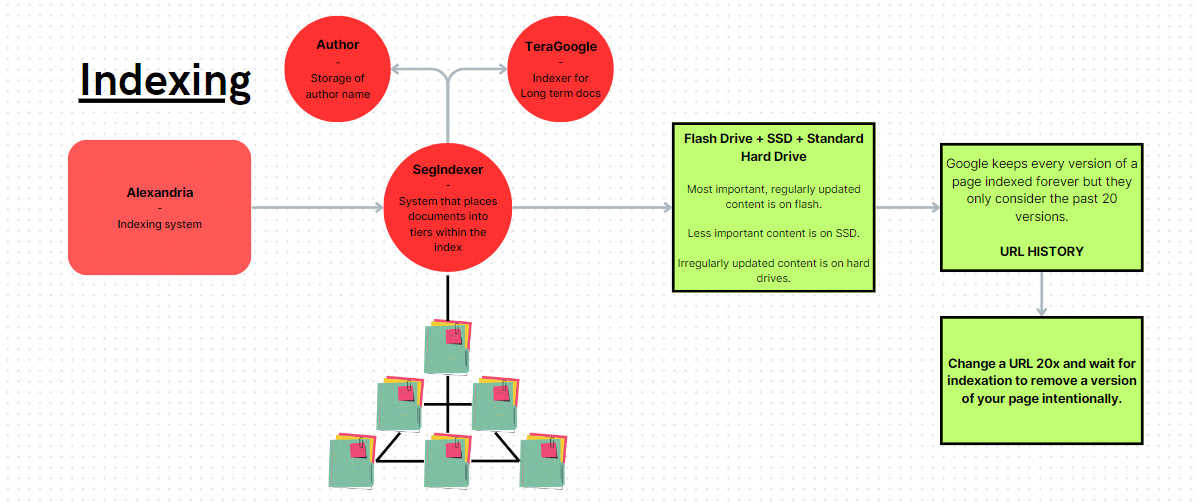

How to remove Google’s memory of an old version of a document

This is perhaps a bit of conjecture, but the logic is sound. According to the leak, Google keeps a record of every version of a webpage. This means Google has an internal web archive of sorts (Google’s own version of the Wayback Machine).

The nuance is that Google only uses the last 20 versions of a document. If you update a page, wait for a crawl and repeat the process 20 times, you will effectively push out certain versions of the page.
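The eviction logic can be sketched with a fixed-size buffer. The limit of 20 comes from the reading of the leak above. The `VersionHistory` class and its method are hypothetical names used only to show how older versions would fall out of a bounded history.

```python
from collections import deque

MAX_VERSIONS = 20  # figure taken from the article's reading of the leak

class VersionHistory:
    """Keeps only the most recent crawled versions of a document."""

    def __init__(self, max_versions=MAX_VERSIONS):
        # A deque with maxlen silently drops the oldest item when full.
        self.versions = deque(maxlen=max_versions)

    def record_crawl(self, content):
        self.versions.append(content)

history = VersionHistory()
history.record_crawl("old version you want forgotten")

# Update the page and wait for a crawl, 20 times over.
for i in range(1, 21):
    history.record_crawl(f"update {i}")

print("old version you want forgotten" in history.versions)  # False
print(len(history.versions))  # 20
```

In this model, the twentieth recorded update is the one that pushes the original version out of the window.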

This might be useful information, considering that the historical versions are associated with various weights and scores.

Remember that the documentation has two forms of update history: significant update and update. It is unclear whether significant updates are required for this sort of version memory tomfoolery.

Google Search ranking system

While it’s conjecture, one of the most interesting things I found was the term weight (literal size).

This would indicate that bolding your words or the size of the words, in general, has some sort of impact on document scores.

Index storage mechanisms

- Flash drives: Used for the most important and regularly updated content.

- Solid state drives: Used for less important content.

- Standard hard drives: Used for irregularly updated content.

Interestingly, the standard hard drive is used for irregularly updated content.

Google’s indexer now has a name: Alexandria

Go figure. Google would name the largest index of information after the most famous library. Let’s hope the same fate does not befall Google.

Two other indexers are prevalent in the documentation: SegIndexer and TeraGoogle.

- SegIndexer is a system that places documents into tiers within its index.

- TeraGoogle is long-term memory storage.

Did we just confirm seed sites or sitewide authority?

The section titled “GoogleApi.ContentWarehouse.V1.Model.QualityNsrNsrData” mentions a factor named isElectionAuthority. The leak says, “Bit to determine whether the site has the election authority signal.”

This is interesting because it might be what people refer to as “seed sites.” It could also be topical authorities or websites with a PageRank of 9/10 (Note: toolbarPageRank is referenced in the leak).

It’s important to note that nsrIsElectionAuthority (a slightly different factor) is considered deprecated, so who knows how we should interpret this.

This specific section is one of the most densely packed sections in the entire leak.

- Source: Quality NSR data attributes

Surprise, surprise! Short content does not equal thin content. I’ve been trying to prove this with my cocktail recipe pages, and this leak confirms my suspicion.

Interestingly enough, short content has a different scoring system applied to it (not entirely unique but different to an extent).

Fresh links seem to trump existing links

This one was a bit of a surprise, and I could be misunderstanding things here. According to freshdocs, a link value multiplier, links from newer webpages are better than links inserted into older content.

Obviously, we must still incorporate our knowledge of a high-value page (mentioned throughout this presentation).

Still, I had this one wrong in my mind. I figured the age would be a good thing, but in reality, it isn’t really the age that gives a niche edit value, it’s the traffic or internal links to the page (if you go the niche edit route).

This doesn’t mean niche edits are ineffective. It simply means that links from newer pages appear to get an unknown value multiplier.

Quality NsrNsrData

Here is a list of some scoring factors that stood out most from the NsrNsrData document.

- titlematchScore: A sitewide signal that indicates how well titles match user queries. (I never even considered that a site-wide title score could be used.)

- site2vecEmbedding: Like word2vec, this is a sitewide vector, and it’s fascinating to see it included here.

- pnavClicks: I’m not sure what pnav is, but I’d assume this refers to navigational information derived from user click data.

- chromeInTotal: Site-wide Chrome views. For an algorithm built on specific pages, Google definitely likes to use site-wide signals.

- chardVariance and chardScoreVariance: I believe Google is applying site-level chard scores, which predict site/page quality based on your content. Google measures variances in any way you can imagine, so consistency is key.

It seems like site authority and a host of NSR-related scores are all applied in Qstar. My best guess is that Qstar is the aggregate measurement of a website’s scores. It likely includes authority as just one of those aggregate values.

Scoring in the absence of measurement

nsrdataFromFallbackPatternKey. If NSR data has not been computed for a chunk, then data comes from an average of other chunks from the website. Basically, you have chunks of your site that have values associated with them and these values are averaged and applied to the unknown document.

Google is making scores based on topics, internal links, referring domains, ratios, clicks and all sorts of other things. If normalized site rank hasn’t been computed for a chunk (Google used chunks of your website and pages for scoring purposes), the existing scores associated with other chunks will be averaged and applied to the unscored chunk.
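Here is a minimal sketch of that fallback, assuming a plain arithmetic mean over the chunks that already have a score. The chunk paths, the score values and the `chunk_score` function are hypothetical. The leak only says that the data comes from an average of other chunks.

```python
def chunk_score(chunk, computed_scores):
    """Return the chunk's own score, or the mean of the scored chunks as a fallback."""
    if chunk in computed_scores:
        return computed_scores[chunk]
    if not computed_scores:
        return None  # nothing to average yet
    return sum(computed_scores.values()) / len(computed_scores)

# Hypothetical per-chunk scores for one website.
scores = {"/blog/": 0.8, "/recipes/": 0.6, "/old-press/": 0.1}

# A brand-new section inherits the site average, weak chunk included.
print(chunk_score("/new-section/", scores))

# Pruning the weakest chunk raises what the new section inherits.
pruned = {path: value for path, value in scores.items() if path != "/old-press/"}
print(chunk_score("/new-section/", pruned))
```

If the averaging works anything like this, a single weak section drags down every unscored page on the site.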

I don’t think you can optimize for this, but one thing has been made abundantly clear:

You need to really focus on consistent quality, or you’ll end up hurting your SEO scores across the board by lowering your score average or topicality.

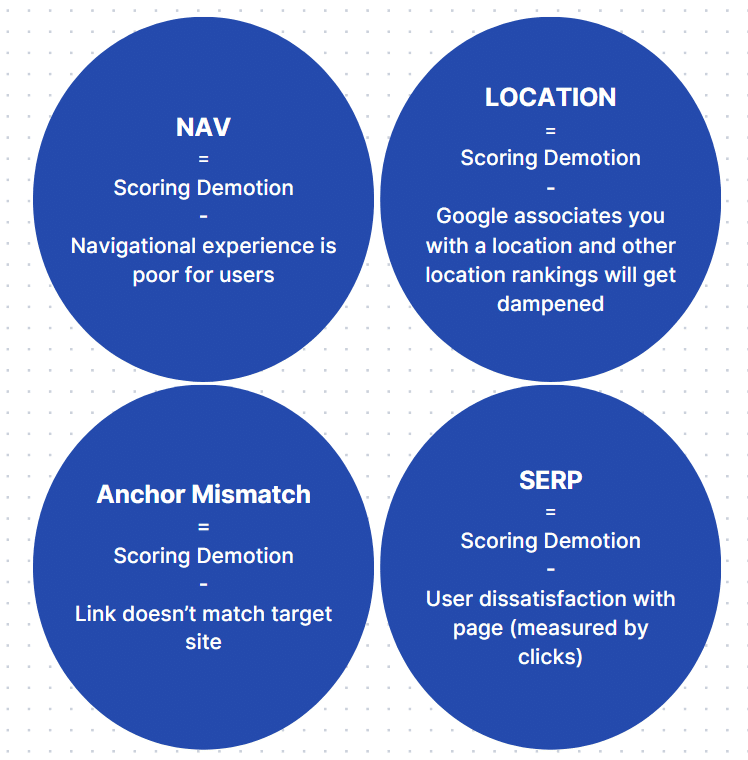

Demotions to watch out for

Much of the content from the leak focused on demotions that Google uses. I find this as helpful (maybe even more helpful) as the positive scoring factors.

Key points:

- Poor navigational experience hurts your score.

- Location identity hurts your scores for pages trying to rank for a location not necessarily linked to your location identity.

- Links that don’t match the target site will hurt your score.

- User click dissatisfaction hurts your score.

It’s important to note that click satisfaction scores aren’t based on dwell time. If you continue searching for information NavBoost deems to be the same, you’ll get the scoring demotion.

A unique part of NavBoost is its role in bundling queries based on interpreted meaning.

Spam

- gibberishScores are mentioned. This refers to spun content, filler AI content and straight nonsense. Some people say Google can’t understand content. Heck, Google says they don’t understand the content. I’d say Google can pretend to understand at the very least, and it sure mentions a lot about content quality for an algorithm with no ability to “understand.”

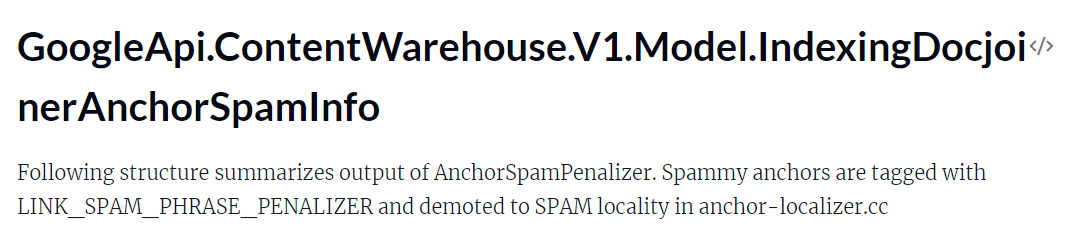

- phraseAnchorSpamPenalty: Combined penalty for anchor demotion. This is not a link demotion or authority demotion. This is a demotion of the score specifically tied to the anchor. Anchors have quite a bit of importance.

- trendSpam: In my opinion, this is CTR manipulation-centered. “Count of matching trend spam queries.”

- keywordStuffingScore: Like it sounds, this is a score of keyword stuffing spam.

- spamBrainTotalDocSpamScore: Spam score identified by spam brain going from 0 to 1.

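- spamRank: Measures the likelihood that a document links to known spammers. The value is listed as 0 and 65535. Since 65535 is the largest unsigned 16-bit integer, this most likely describes a range from 0 to 65535 rather than two discrete values.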

- spamWordScore: Apparently, certain words are spammy. I primarily found this score relating to anchors.

How is no one talking about this one? An entire page dedicated to anchor text observation, measurement, calculation and assessment.

Source: Anchor spam info data module

- “Over how many days 80% of these phrases were discovered” is an interesting one.

- Spam phrase fraction of all anchors of the document (likely a link farm detection tactic – sell fewer links per page).

- The average daily rate of spam anchor discovery.

- How many spam phrases are found in the anchors among unique domains.

- Total number of trusted sources for this URL.

- The number of trusted anchors with anchor text matching spam terms.

- Trusted examples are simply a list of trusted sources.

At the end of it all, you get spam probability and a spam penalty.
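Two of the measurements above are easy to sketch: the spam phrase fraction and the number of days over which 80% of the spam anchors were discovered. The spam phrase list, the anchor data and both function names are invented for illustration. The leak names the measurements but not the calculations behind them.

```python
import math
from datetime import date

def spam_fraction(anchors, spam_phrases):
    """Share of a document's anchors whose text matches a spam phrase."""
    if not anchors:
        return 0.0
    spam = sum(1 for text, _ in anchors if text.lower() in spam_phrases)
    return spam / len(anchors)

def days_to_discover(anchors, spam_phrases, share=0.8):
    """Days from the first spam anchor to the one that completes `share` of them."""
    dates = sorted(seen for text, seen in anchors if text.lower() in spam_phrases)
    if not dates:
        return None
    cutoff = math.ceil(share * len(dates))  # how many anchors cover the share
    return (dates[cutoff - 1] - dates[0]).days

# Hypothetical data: (anchor text, date the anchor was first seen).
spam_phrases = {"cheap pills", "casino bonus"}
anchors = [
    ("Acme Widgets", date(2024, 1, 1)),
    ("acme.example", date(2024, 1, 15)),
    ("read more", date(2024, 2, 1)),
    ("cheap pills", date(2024, 3, 1)),
    ("cheap pills", date(2024, 3, 2)),
    ("casino bonus", date(2024, 3, 2)),
    ("cheap pills", date(2024, 3, 3)),
    ("casino bonus", date(2024, 6, 1)),
]

print(spam_fraction(anchors, spam_phrases))     # 0.625
print(days_to_discover(anchors, spam_phrases))  # 2
```

A high spam fraction combined with a very short discovery window looks like a burst of purchased links, which is presumably the kind of pattern these fields are meant to surface.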

Here’s a big spoonful of unfairness, and it doesn’t surprise any SEO veterans.

trustedTarget is a metric associated with spam anchors, and it says “True if this URL is on trusted source.”

When you become “trusted” you can get away with more, and if you’ve investigated these “trusted sources,” you’ll see that they get away with quite a bit.

On a positive note, Google has a Trawler policy that essentially appends “spam” to known spammers, and most crawls auto-reject spammers’ IPs.

9 pieces of actionable advice to consider

- You should invest in a well-designed site with intuitive architecture so you can optimize for NavBoost.

- If you have a site where SEO is important, you should remove / block pages that aren’t topically relevant. You can contextually bridge two topics to reinforce topical connections. Still, you must first establish your target topic and ensure each page scores well by optimizing for everything I’m sharing at the bottom of this document.

- Because embeddings are used on a page-by-page and site-wide basis, we must optimize our headings around queries and make the paragraphs under the headings answer those queries clearly and succinctly.

- Clicks and impressions are aggregated and applied on a topical basis, so you should write more content that can earn more impressions and clicks. Even if you’re only chipping away at the impression and click count, if you provide a good experience and are consistent with your topic expansion, you’ll start winning, according to the leaked docs.

- Irregularly updated content has the lowest storage priority for Google and is definitely not showing up for freshness. It is very important to update your content. Seek ways to update the content by adding unique info, new images, and video content. Aim to kill two birds with one stone by scoring high on the “effort calculations” metric.

- While it’s difficult to maintain high-quality content and publishing frequency, there is a reward. Google is applying site-level chard scores, which predict site/page quality based on your content. Google measures variances in any way you can imagine, so consistency is key.

- Impressions for the entire website are part of the quality NSR data. This means you should really value the impression growth as it is a good sign.

- Entities are very important. Salience scores for entities and top entity identification are mentioned.

- Remove poorly performing pages. If user metrics are bad, no links point to the page and the page has had plenty of opportunity to thrive, then that page should be eliminated. Site-wide scores and scoring averages are mentioned throughout the leaked docs, and it is just as valuable to delete the weakest links as it is to optimize your new article (with some caveats).

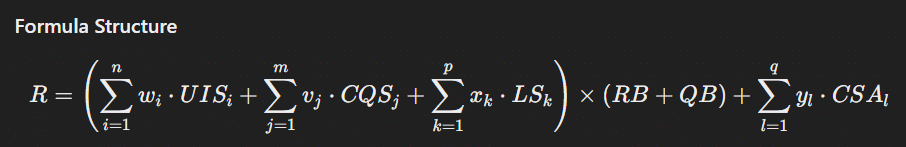

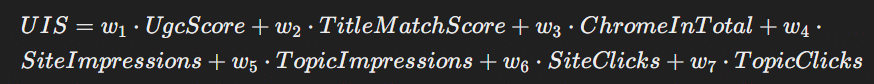

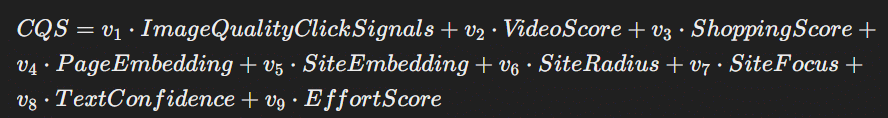

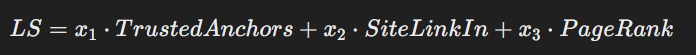

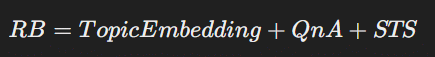

This is not a perfect depiction of Google’s algorithm, but it’s a fun attempt to consolidate the factors and express the leak into a mathematical formula (minus the precise weights).

Definitions and metrics

Definitions and metrics

R: Overall ranking score

UIS (User Interaction Scores)

- UgcScore: Score based on user-generated content engagement

- TitleMatchScore: Score for title relevance and match with user query

- ChromeInTotal: Total interactions tracked via Chrome data

- SiteImpressions: Total impressions for the site

- TopicImpressions: Impressions on topic-specific pages

- SiteClicks: Click-through rate for the site

- TopicClicks: Click-through rate for topic-specific pages

CQS (Content Quality Scores)

- ImageQualityClickSignals: Quality signals from image clicks

- VideoScore: Score based on video quality and engagement

- ShoppingScore: Score for shopping-related content

- PageEmbedding: Semantic embedding of page content

- SiteEmbedding: Semantic embedding of site content

- SiteRadius: Measure of deviation within the site embedding

- SiteFocus: Metric indicating topic focus

- TextConfidence: Confidence in the text’s relevance and quality

- EffortScore: Effort and quality in the content creation

LS (Link Scores)

- TrustedAnchors: Quality and trustworthiness of inbound links

- SiteLinkIn: Average value of incoming links

- PageRank: PageRank score considering various factors (0,1,2, ToolBar, NR)

RB (Relevance Boost): Relevance boost based on query and content match

- TopicEmbedding: Relevance over time value

- QnA (Quality before Adjustment): Baseline quality measure

- STS (Semantic Text Scores): Aggregate score based on text understanding, salience and entities

QB (Quality Boost): Boost based on overall content and site quality

- SAS (Site Authority Score): Sum of scores relating to trust, reliability and link authority

- EFTS (Effort Score): Page effort incorporating text, multimedia and comments

- FS (Freshness Score): Update tracker and original post date tracker

CSA (Content-Specific Adjustments): Adjustments based on specific content features on SERP and on page

- CDS (Chrome Data Score): Score based on Chrome data, focusing on impressions and clicks across the site

- SDS (Serp Demotion Score): Reduction based on SERP experience measurement score

- EQSS (Experimental Q Star Score): Catch-all score for experimental variables tested daily

R=((w1⋅UgcScore+w2⋅TitleMatchScore+w3⋅ChromeInTotal+w4⋅SiteImpressions+w5⋅TopicImpressions+w6⋅SiteClicks+w7⋅TopicClicks)+(v1⋅ImageQualityClickSignals+v2⋅VideoScore+v3⋅ShoppingScore+v4⋅PageEmbedding+v5⋅SiteEmbedding+v6⋅SiteRadius+v7⋅SiteFocus+v8⋅TextConfidence+v9⋅EffortScore)+(x1⋅TrustedAnchors+x2⋅SiteLinkIn+x3⋅PageRank))×(TopicEmbedding+QnA+STS+SAS+EFTS+FS)+(y1⋅CDS+y2⋅SDS+y3⋅EQSS)

Generalized scoring overview

- User Engagement = UgcScore, TitleMatchScore, ChromeInTotal, SiteImpressions, TopicImpressions, SiteClicks, TopicClicks

- Multi-Media Scores = ImageQualityClickSignals, VideoScore, ShoppingScore

- Links = TrustedAnchors, SiteLinkIn (avg value of incoming links), PageRank (0, 1, 2, ToolBar and NR)

- Content Understanding = PageEmbedding, SiteEmbedding, SiteRadius, SiteFocus, TextConfidence, EffortScore

Generalized Formula: [(User Interaction Scores + Content Quality Scores + Link Scores) x (Relevance Boost + Quality Boost) + X (content-specific score adjustments)] – (Demotion Score Aggregate)
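For readers who prefer code to algebra, the generalized formula can be sketched as a small function. This assumes every input has already been reduced to a single number (real embeddings are vectors), that unlisted weights default to 1 and that demotions are expressed as negative weights. All values below are made up, and none of the weights come from the leak.

```python
def weighted_sum(values, weights):
    """Sum of value * weight, using a weight of 1.0 when none is given."""
    return sum(weights.get(name, 1.0) * value for name, value in values.items())

def ranking_score(uis, cqs, ls, boosts, adjustments, weights=None):
    """(UIS + CQS + LS) x (relevance + quality boosts) + content-specific adjustments."""
    weights = weights or {}
    base = weighted_sum(uis, weights) + weighted_sum(cqs, weights) + weighted_sum(ls, weights)
    boost = sum(boosts.values())
    return base * boost + weighted_sum(adjustments, weights)

score = ranking_score(
    uis={"TitleMatchScore": 0.5, "SiteClicks": 0.5},   # user interaction scores
    cqs={"EffortScore": 1.0},                           # content quality scores
    ls={"PageRank": 2.0},                               # link scores
    boosts={"SAS": 0.5, "FS": 0.25},                    # relevance and quality boosts
    adjustments={"CDS": 1.0, "SDS": 0.5},               # content-specific adjustments
    weights={"SDS": -1.0},                              # treat the SERP demotion as a penalty
)

print(score)  # 3.5
```

The point of the structure is that boosts multiply the base score while adjustments and demotions are added or subtracted afterward, so a strong demotion can outweigh gains made elsewhere.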
